That fake news is a tricky problem to solve is probably not news to anyone at this point. However, the problem stands to get a lot trickier once the fakesters open their eyes to the potential of a mostly untapped weapon: trust in videos.

Fake news so far has relied on social media bubbles and textual misinformation with the odd photoshopped picture thrown in here and there. This has meant that, by and large, curious individuals have been able to uncover fakes with some investigation.

This could soon change. You see, “pics or it didn't happen” isn't just a meme; it is the mental model by which people judge the veracity of a piece of information on the Internet. What happens when the fakesters are able to create forgeries that even a keen eye cannot distinguish? How do we distinguish truth from fiction?

We are far closer to this future than many realise. In 2017, researchers created a tool that produced realistic-looking video clips of Barack Obama saying things he has never been recorded saying. Since then, a barrage of similar tools has become available; an equally worrying, if slightly tangential, trend is the rise of fake pornographic videos that superimpose images of celebrities onto adult videos.

These tools represent the latest weapons in the arsenal of fake news creators – ones far easier to use for the layman than those before. While the videos produced by these tools may not presently stand up to scrutiny by forensics experts, they are already good enough to fool a casual viewer and are only getting better. The end result is that creating a good-enough fake video is now a trivial matter.

There are, of course, more traditional ways of creating fake videos as well. The White House was caught using the oldest trick in the book while trying to justify the barring of a reporter from the briefing room: they sped up the video to make it look like the reporter was physically rough with a staff member.

Other traditional techniques include editing videos misleadingly to leave out critical context (as in the Planned Parenthood controversy) and splicing clips so that answers appear to respond to the wrong questions. I expect that we will see an increase in these traditional fake videos before a further transition to the complete fabrications discussed above. Both represent a grave danger to the pursuit of truth.

Major platforms are acutely aware of the issues. Twitter has recently introduced a fact-checking feature to label maliciously edited videos in its timeline. YouTube has put disclaimers about the nature of news organizations below their videos (for example, whether or not a channel belongs to a government-sponsored news organization). Facebook has certified fact checkers who may label viral stories as misleading.

However, these approaches rely on manual verification, and by the time a story catches the attention of a fact checker, it has already been seen by millions. YouTube’s approach is particularly lacking since it says nothing about an individual video, only about the source of funding of a very small set of channels.

Now, forensically detecting forgeries in videos is a deeply researched field with work dating back decades. There are many artefacts that are left behind when someone edits a video: the compression looks weird, the shadows may jump in odd patterns, the shapes of objects might get distorted.

There are numerous ways to detect these oddities. However, this does not solve the problem at hand, for two reasons:

1. No one has combined all the detection measures into one automatic tool that also keeps false positives acceptably low.

2. Editing is an expected part of video production: all videos go through compression and editorial changes, fake news or otherwise.

The second point there is the real killer. Detecting that an edit has happened doesn’t help much when every video is edited. The thing we want to actually detect is malicious edits – edits designed to mislead the viewer. This is a hard problem. What is malicious is, to some degree, subjective and therefore automated solutions won’t work. This fundamental issue has stumped researchers for the last few years.

A technical remedy for deep fake videos

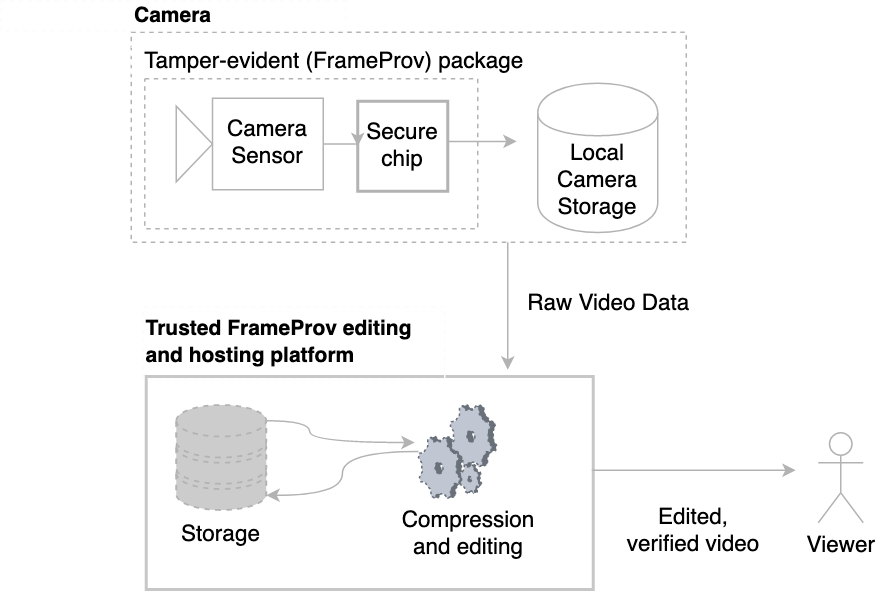

I would like to propose a solution. Instead of trying to detect malice, we should simply allow honest editors to declare the edits they’ve done. This then becomes a tractable problem: show the user every declared edit and if there are any undeclared edits, warn the user that the video has been misleadingly edited. This gives the viewer insight into the raw, true video as well as all the edits made in the production of the video. I call this system FrameProv (for Provenance at the Frame level) and I believe that if this system becomes widely used, we will finally have an effective weapon against the scourge of fake news video.

At the heart of FrameProv lies a novel data fingerprint that keeps track of each frame as it leaves the camera’s sensor. Any change made to the frames from this point onwards would immediately destroy the fingerprint, making it obvious that an edit has taken place. We then provide video editors with a language to specify the edits they want to make. The list of edits they make is then attributed to the editor’s credentials. In this way, the editor can claim both responsibility and credit for the edits they’ve made. Only if all editors who worked on a particular video make this assertion and release it to the public will the FrameProv system accredit the video.
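To make the fingerprint idea concrete, here is a minimal sketch. It is not FrameProv's actual fingerprint, whose construction is not described in this post; it assumes a plain SHA-256 hash chain as a stand-in, and the function name `fingerprint_frames`, the `seed` parameter and the byte-string "frames" are all hypothetical.

```python
import hashlib


def fingerprint_frames(frames, seed=b"camera-seed"):
    """Return one chained SHA-256 digest (hex) per frame.

    Assumption: each digest covers the previous digest plus the current
    frame's bytes, so altering any frame changes every later fingerprint.
    """
    fingerprints = []
    previous = hashlib.sha256(seed).digest()
    for frame in frames:
        previous = hashlib.sha256(previous + frame).digest()
        fingerprints.append(previous.hex())
    return fingerprints


# Hypothetical "frames": real frames would be raw sensor data.
raw = [b"frame-0", b"frame-1", b"frame-2"]
original = fingerprint_frames(raw)
tampered = fingerprint_frames([b"frame-0", b"frame-X", b"frame-2"])

# The untouched first frame still matches; the altered frame and
# everything after it no longer do.
for index, (a, b) in enumerate(zip(original, tampered)):
    print(index, "match" if a == b else "MISMATCH")
```

Chaining is only one possible design choice: it makes dropped or reordered frames as visible as altered ones, at the cost of needing the declared edit list to explain any legitimate cut.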

Moreover, even in cases where there are no undeclared edits, we still give viewers the option to inspect all the declared edits. In this way, viewers have a way to take control over the information they receive and make up their own minds about whether to trust it or not, as opposed to just trusting a news organisation (or a Twitter handle).

We expect FrameProv to be used initially by news organizations that have a strong interest in proving the validity of their claims. The second avenue where we expect to see adoption is in CCTV cameras: if a piece of footage is going to be used in a court of law then it ought to be able to prove its provenance. On the viewing side, all that users need is a browser extension which downloads the raw data fingerprints and compares them to the displayed video.
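The viewer-side comparison could look roughly like the sketch below. It rests on several assumptions that do not come from the FrameProv design: fingerprints are independent per-frame SHA-256 digests, the only supported declared edit is a hypothetical `cut` operation (with `start` inclusive and `end` exclusive), and the names `frame_hash`, `apply_declared_cuts` and `verify` are invented for illustration.

```python
import hashlib


def frame_hash(frame: bytes) -> str:
    """Stand-in fingerprint: SHA-256 of one frame's bytes."""
    return hashlib.sha256(frame).hexdigest()


def apply_declared_cuts(fingerprints, declared_edits):
    """Drop every raw frame index covered by a declared cut."""
    removed = set()
    for edit in declared_edits:
        if edit["op"] != "cut":
            raise ValueError(f"unsupported edit: {edit['op']}")
        removed.update(range(edit["start"], edit["end"]))
    return [fp for i, fp in enumerate(fingerprints) if i not in removed]


def verify(raw_fingerprints, declared_edits, displayed_frames):
    """Accredit the video only if declared edits explain what is shown."""
    expected = apply_declared_cuts(raw_fingerprints, declared_edits)
    actual = [frame_hash(frame) for frame in displayed_frames]
    if actual == expected:
        return "accredited"
    return "warning: undeclared edits"


# Hypothetical data: four raw frames and one cut declared by "alice".
raw_frames = [b"f0", b"f1", b"f2", b"f3"]
raw_fps = [frame_hash(frame) for frame in raw_frames]
edits = [{"op": "cut", "start": 1, "end": 2, "editor": "alice"}]

print(verify(raw_fps, edits, [b"f0", b"f2", b"f3"]))  # accredited
print(verify(raw_fps, edits, [b"f0", b"f3"]))         # warning: undeclared edits
```

A real verifier would also need to check who signed each declared edit and to handle edits that change pixel content, neither of which this sketch attempts.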

Whilst not every viewer will use FrameProv to investigate suspect edits, more dedicated users would be able to quickly root out cheats and flag fake video for others. If video hosting platforms start integrating the verification script into their video players, then FrameProv validation would become the default rather than the exception, making it far harder for fake videos to pass unnoticed.

These are our initial steps against fake news but there’s plenty of research and engineering that needs to be done to make FrameProv a true force for good.

We have published our initial design specification and goals here: https://www.nspw.org/2019/accepted

You can follow FrameProv’s progress and inspect the source code here: https://github.com/Mansoor-AR/FrameProv