Data is at work in every part of your life.

Retailers, governments, employers and more see it as a critical part of knowing how you tick and controlling interactions with you.

For better and worse, it’s happening everywhere, so we had better get wise to how it actually works.

yourData is openDemocracy’s project to promote data transparency. We explore ways to show people how their data is used by data-driven websites. If a website shows me an article, tries to sell me a product, or manages my access to government services, I want to know whether and how it is using my data (like my current location, my gender, or my race) to make that decision. This kind of decision is called personalisation: a filter that you typically have little knowledge of or control over.

yourData tells our readers if they are viewing an automatically personalised page and shows them what data shaped that decision. We make this information available at the exact moment that they get the personalised content, not far away in a settings page or elsewhere.

There are many ways that this kind of transparency feature can lead to a more informed web experience. We are in conversation with technologists, designers, ethicists, readers and others who think about these questions.

So we asked some of them to share their ideas about how we might develop these features and how they would like to see transparency advanced more widely.

The answers are a mixture of design insights and broader reflections on the politics of data. Some propose thoughtful developments to our initial designs; others present fundamental objections to our approach. These ideas will inform our thinking as we develop our designs and look for ways to build a more transparent web.

We thank the respondents for their opinions and expertise.

More submissions will be published in future, so please send us your own ideas.

Laura Kalbag is Co-Founder of the Small Technology Foundation.

What changes do you think would improve the yourData feature as it currently stands? Or what about personalisation technologies more generally?

It’s important to understand how personalisation works, what data a business can obtain about you, and how it builds profiles of you to target you with ads and other types of content. However, when ads or other targeted content are made “transparent”, businesses will only show you the targeting data they feel comfortable sharing, the data that won’t scare you off. Very few of us are worried about a business knowing our gender, that we live in a particular city or that we’re in a certain age bracket, since we tend to share most of that information willingly in our social media profiles.

We tend to be less comfortable when we find out the business knows where in a building we live, what time we leave the house and who we’ve slept alongside...

We also need transparency not just on the data a business collects about us, but on how its algorithms work. An ad will only be shown to a limited number of people, so what in the algorithm determines which people? Is it based on our perceived persuadability, the likelihood of us clicking an ad, or some other factor they’ve determined about us?

We also need to know how the data collected about us is otherwise used. Is it combined with data about our friends and family? Is it shared with other businesses who have further data about us? As Aral Balkan says, data about a person is not the same as data about a rock. The data about us, and how it’s used, can have wide-ranging effects on our lives, from our credit scores and insurance to whether a platform decides to show us non-stop ads for political propaganda.

We need to find the means to expose these uses of our data: to expose surveillance capitalism at work. When we, as people who use technology, learn how our data is used to exploit us, we are unlikely to want to keep using that technology. But because technology has become such an integrated part of our infrastructure and society, we’re often left with little choice. This is why, as valuable as it is to expose the worst of technology, there’s little reassurance unless we have choice. We need legal recourse to punish and prevent exploitative and harmful uses of our data. We need alternative technologies so that people don’t have to choose between participating in the modern world and being exploited. Transparency has little value without choice.

How do you think features like this should be designed – what methodologies or design frameworks? Can we create this with an open-source project? Who should be involved?

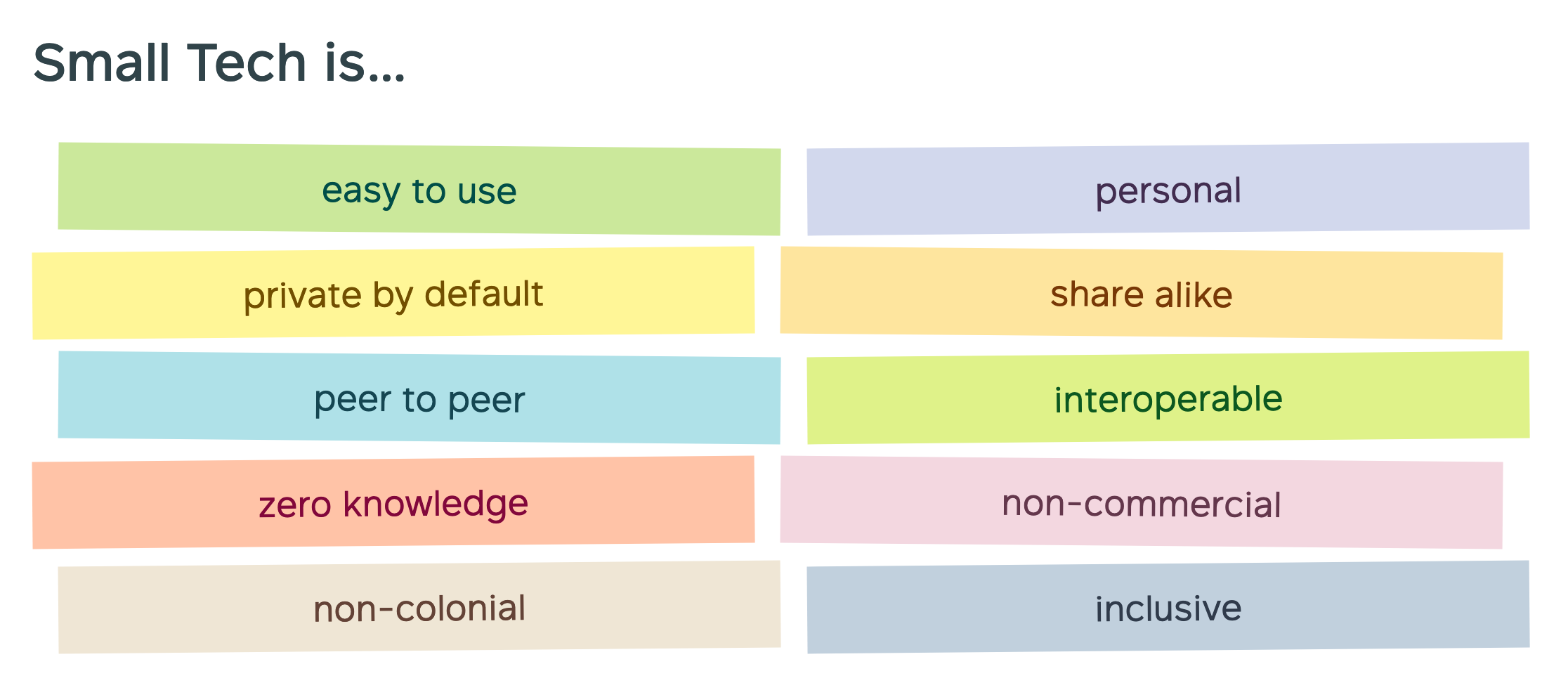

If we are to expose the problems with data collection, we should not participate in the collection of data ourselves. At Small Technology Foundation, we came up with a set of Small Technology principles for creating everyday tools for everyday people, designed to increase human welfare rather than corporate profits. The principles that are particularly relevant in this context are:

Private by default. Do not collect any person’s data by default; technology should work without it. If a person’s data is required for functionality (for example, to demonstrate how a website might know their location), it should only be collected and used with the person’s explicit consent. To give consent, the person should know exactly what data will be collected, what it will be used for, where it will be stored, and for how long.
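As a rough illustration of the consent requirement in the "private by default" principle, here is a minimal TypeScript sketch. It assumes a hypothetical `ConsentRequest` shape with one field for each disclosure the principle lists (what data, what purpose, where stored, how long); these names are invented for illustration and do not come from Small Technology Foundation. Nothing may be used until an explicit record exists, so an empty list of records always means "no".

```typescript
// Hypothetical shape: one field for each disclosure the principle requires.
interface ConsentRequest {
  dataItem: string;        // what data will be collected, e.g. "approximate location"
  purpose: string;         // what it will be used for
  storageLocation: string; // where it will be stored, e.g. "this device only"
  retentionDays: number;   // how long it will be stored
}

// A request the person has explicitly agreed to, with the time of agreement.
interface ConsentRecord extends ConsentRequest {
  grantedAt: Date;
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Only ask for consent when every disclosure has actually been filled in.
function isFullyDisclosed(req: ConsentRequest): boolean {
  const textFilled = [req.dataItem, req.purpose, req.storageLocation].every(
    (s) => s.trim().length > 0,
  );
  return textFilled && Number.isFinite(req.retentionDays) && req.retentionDays > 0;
}

// Private by default: with no matching record, the answer is always "no".
// Consent given for one purpose does not cover a different purpose.
function mayUse(records: ConsentRecord[], dataItem: string, purpose: string): boolean {
  return records.some((r) => r.dataItem === dataItem && r.purpose === purpose);
}

// Latest moment the data may still be held, assuming (hypothetically)
// that it is collected at the moment consent is granted.
function deleteBy(record: ConsentRecord): Date {
  return new Date(record.grantedAt.getTime() + record.retentionDays * DAY_MS);
}
```

Keying consent on both the data item and the purpose is a deliberate choice in this sketch: agreeing to share a location for one demonstration should not silently authorise a different use of it.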

Zero knowledge. When collecting or using people’s data, no “admin”, developer or host should be able to access that data. The system should be end-to-end encrypted, or peer-to-peer with the person’s data only stored on their device.

Easy to use and inclusive. Understanding how our data can be used against us, and protecting ourselves from exploitation, should not require a lot of technical knowledge, privilege, money and time. When we’re building tools for people to understand how their data is used, they must be accessible (particularly to disabled people and people using assistive technologies) and easily available for everyone to use regardless of their technical knowledge.

Who currently decides how personalisation technology develops online? What organisational forms or civil society activity can help do this better?

Personalisation technology is currently developed by those who seek to exploit people’s personal data for financial gain. It is often hidden behind claims that it is necessary for their business model (without questioning whether that business model should exist) or that it exists “to improve your experience” of their technology. Civil society needs to understand that the businesses building these technologies have no interest in stopping their exploitative practices, but a lot of interest in publicity that makes them appear to care about society, democracy, privacy and ethics.

Civil society needs to put less effort into trying to change the minds behind Big Tech, and instead campaign to inform people about and expose their harmful practices, develop regulation to prevent the worst harms, and support alternative technology so that it can flourish.

As a designer/developer, I see most of my power in being able to influence other people who do similar work to me, because that’s where I have both access and experience, though a technical background is also useful for calling out nonsense in a business setting.

Arnout Terpstra is a privacy researcher who has argued that UX friction is key to reflective thinking and the ability of citizens to engage meaningfully.

From a design perspective (where design means shaping interfaces and information so that individuals are able to use and understand them), I think popups and text are not the best approach: they quickly lead to information overload and popup fatigue, as with the cookie popups on basically all websites nowadays. Furthermore, people will quickly learn how to circumvent such 'transparency notices' in order to get where they want to be, especially if the button to continue (with the default options) is coloured green and the other options (often the more privacy-friendly ones) are barely visible.

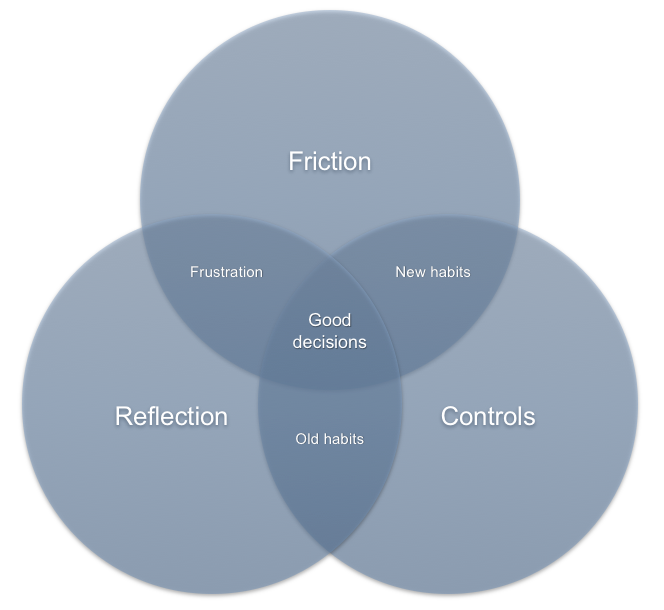

No wonder many interactions within interfaces become mindless. Designers could change this by designing interfaces that deliberately use 'friction' to catch the user's attention. Once they have it, designers could help the user reflect on privacy and privacy decisions, e.g. by providing information about what caused the friction. However, do we already know what information people are actually interested in, i.e. which aspect(s) of personalisation they want to know about and what actually helps them reflect? What type of medium works best? From a legal perspective, data controllers must provide transparency. Does this mean they are obliged to work out for themselves how to design for it?

An example of the direction I’m arguing for is Project Alias, by Bjørn Karmann and Tore Knudsen. They built an add-on (“parasite”) for the Google Home speaker, which gives users more control over their speaker.

In a way, they used friction to make the device less user-friendly, since first installing a separate app and training the device to respond to your own catch phrase is more work. Also, the device is physically turned off when you’re not using it, which could lead to slower response times. This means more time to reflect on, for example, how the device handles your data.

Although I'm a big fan of the GDPR, I fear there is a serious risk that whatever was meant by transparency will end up becoming something similar to privacy policies (factual textual documents which are legally binding in case of a dispute but which nobody actually reads or understands) or cookie popups (again, factual information but not something users actually engage with). In order to provide meaningful transparency, I think there is plenty of room for new and creative solutions, and designers have a big role to play here.

Claudio Agosti is from Tracking Exposed, which analyzes evidence of algorithms on Facebook, YouTube and Pornhub.

We tend to think that by explaining surveillance capitalism to the masses we can alter their use of technology. But the concept of "internet exploitation" is an advanced abstraction in human thinking, even though it is a matter of public digital health. Since reaching a critical mass is key to affecting how social media platforms function, we should consider focusing on empowering the masses and not only communicating with highly literate readers. Instead of concentrating on explaining how and why, we should focus on showing what.

With the yourData project, there are three directions we would like to brainstorm along:

1) develop visualizations and interactions to explain algorithms. Algorithms are complicated, yet they affect our everyday lives; I expect that with the right visualization, a larger portion of society would become as outraged as we are and more interested in finding solutions.

2) run a collaborative experiment. We have twice tried to organize a group of people to record their personalization. Comparing the personalized suggestions that similar people receive is enough to create clusters and discuss how YouTube sees this community. This is about analyzing social media algorithms, not people. Such experiments require planning, campaigning, and specialist methodologies to produce data and analysis.

3) interview digital activists of various kinds: policy lobbyists, support groups for vulnerable people, law firms, and organizations that encourage citizens to reclaim their digital rights. There are many out there, and openDemocracy can highlight their challenges!
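The collaborative experiment in point 2 compares the suggestions that similar people receive and groups them into clusters. A minimal TypeScript sketch of one way to do that follows, assuming each participant contributes a list of recommended video IDs (all names and IDs here are invented). It uses Jaccard similarity plus threshold-based single-linkage grouping, a generic technique chosen for illustration; it is not a description of how Tracking Exposed actually analyses its data.

```typescript
// Jaccard similarity of two recommendation sets: |A ∩ B| / |A ∪ B|,
// from 0 (nothing in common) to 1 (identical).
function jaccard(a: Set<string>, b: Set<string>): number {
  if (a.size === 0 && b.size === 0) return 1; // two empty lists count as identical
  let shared = 0;
  a.forEach((item) => {
    if (b.has(item)) shared++;
  });
  return shared / (a.size + b.size - shared);
}

// Group participants whose recommendations overlap by at least `threshold`.
// Groups are joined transitively (single-linkage) using a small union-find.
function clusterParticipants(recs: Map<string, string[]>, threshold: number): string[][] {
  const ids = Array.from(recs.keys());
  const sets = ids.map((id) => new Set(recs.get(id) ?? []));
  const parent = ids.map((_, i) => i);
  const find = (i: number): number => (parent[i] === i ? i : (parent[i] = find(parent[i])));

  for (let i = 0; i < ids.length; i++) {
    for (let j = i + 1; j < ids.length; j++) {
      if (jaccard(sets[i], sets[j]) >= threshold) parent[find(i)] = find(j);
    }
  }

  // Collect participant IDs under their group's root.
  const groups = new Map<number, string[]>();
  ids.forEach((id, i) => {
    const root = find(i);
    const members = groups.get(root) ?? [];
    members.push(id);
    groups.set(root, members);
  });
  return Array.from(groups.values());
}
```

For example, two participants recommended `["v1", "v2", "v3"]` and `["v1", "v2", "v4"]` share two of four distinct videos, a similarity of 0.5, so a threshold of 0.5 places them in the same group. The analysis stays about the algorithm's output rather than the people: only recommendation lists are compared.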

Rich Lott is an open-source advocate, works with openDemocracy and is active in the CiviCRM community.

I think a standards-based approach to telling users which content has been customised based on their data is a really good idea. To me:

- Using HTML attributes on an element that contains personalised content is the way forward, since it means any technology can implement it and any technology can then present it.

- The data contained in the attributes should say what type of information was used and its source, but not the actual data used unless that can be done securely, i.e. for an authenticated user who knows their data will be revealed.

- People have the right to view data held on them and to have it corrected or deleted, so perhaps the standard could include a method for them to request this e.g. some data attributes that identify the responsible body and how to contact them, which could be a tech based solution or simply an email address/phone number.

- Users should be able to disable personalisation with a single click. I would support use of the DoNotTrack header for this, but then that's never been implemented in any concrete way, and I suppose users might value customisation of content on certain sites but not others, so maybe a cookie (which, ha, they'd have to consent to).

- Personally I would prefer to opt in to personalised content instead of opt out of it.
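To make the attribute-based proposal concrete, here is a minimal TypeScript sketch. The attribute names (`data-personalised`, `data-personalisation-types`, `data-personalisation-source`, `data-controller`, `data-controller-contact`) and the `personalisation` cookie are hypothetical; no such standard exists yet. Following the points above, the attributes carry only the types of information used and their source, never the values, plus an optional contact for access, correction or deletion requests. Personalisation is opt-in and is switched off whenever a DNT header is sent. The code works on plain attribute maps so it runs outside a browser.

```typescript
// What a site would disclose about one personalised element (types, not values).
interface PersonalisationDisclosure {
  dataTypes: string[]; // kinds of information used, e.g. ["location", "reading history"]
  source: string;      // where that information came from
  controller?: string; // body responsible for the data
  contact?: string;    // where to ask for access, correction or deletion
}

// Hypothetical attribute names; a real standard would have to agree on these.
function toAttributes(d: PersonalisationDisclosure): Record<string, string> {
  const attrs: Record<string, string> = {
    "data-personalised": "true",
    "data-personalisation-types": d.dataTypes.join(","),
    "data-personalisation-source": d.source,
  };
  if (d.controller) attrs["data-controller"] = d.controller;
  if (d.contact) attrs["data-controller-contact"] = d.contact;
  return attrs;
}

// Any tool (the site itself, a browser extension, an assistive add-on) can read them back.
function fromAttributes(attrs: Record<string, string>): PersonalisationDisclosure | null {
  if (attrs["data-personalised"] !== "true") return null;
  return {
    dataTypes: (attrs["data-personalisation-types"] ?? "")
      .split(",")
      .map((t) => t.trim())
      .filter((t) => t.length > 0),
    source: attrs["data-personalisation-source"] ?? "unknown",
    controller: attrs["data-controller"],
    contact: attrs["data-controller-contact"],
  };
}

// Escape a value before writing it into HTML markup as a quoted attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

function serialise(attrs: Record<string, string>): string {
  return Object.keys(attrs)
    .map((name) => `${name}="${escapeAttr(attrs[name])}"`)
    .join(" ");
}

// Opt in rather than opt out: personalise only if the reader has switched it on,
// and never when the browser sends "DNT: 1". Header names are assumed lower-cased,
// as Node's http module provides them; the cookie name is hypothetical.
function shouldPersonalise(
  headers: Record<string, string | undefined>,
  cookies: Record<string, string | undefined>,
): boolean {
  if (headers["dnt"] === "1") return false;
  return cookies["personalisation"] === "on";
}
```

In a browser, the same values would be set on the element wrapping the personalised content and could be read back through `HTMLElement.dataset` (for example `el.dataset.personalisationTypes`).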

These thoughtful responses show the range of considerations involved in ethical personalisation. Our own experiments with these technologies will seek to blend best practice, which is a delicate balance.

It will involve using systems that provide readers with relevant, customised content that helps them find what they want efficiently amongst the mountains of content online.

At the same time, we'll want to find ways to offer them choice, visibility and control. This will involve trialling different systems, iterating to find the best of all worlds, and doing that in an open-source fashion where possible.

We invite readers like you to share ideas for how yourData and similar technologies could be designed.

Please get in touch, or contribute code directly to the GitHub repository (which includes the draft yourData white paper) or to the CodePen design page.

The code and graphics there can be altered or used as a springboard for your suggestions.